A DORA Community Engagement Grants Report

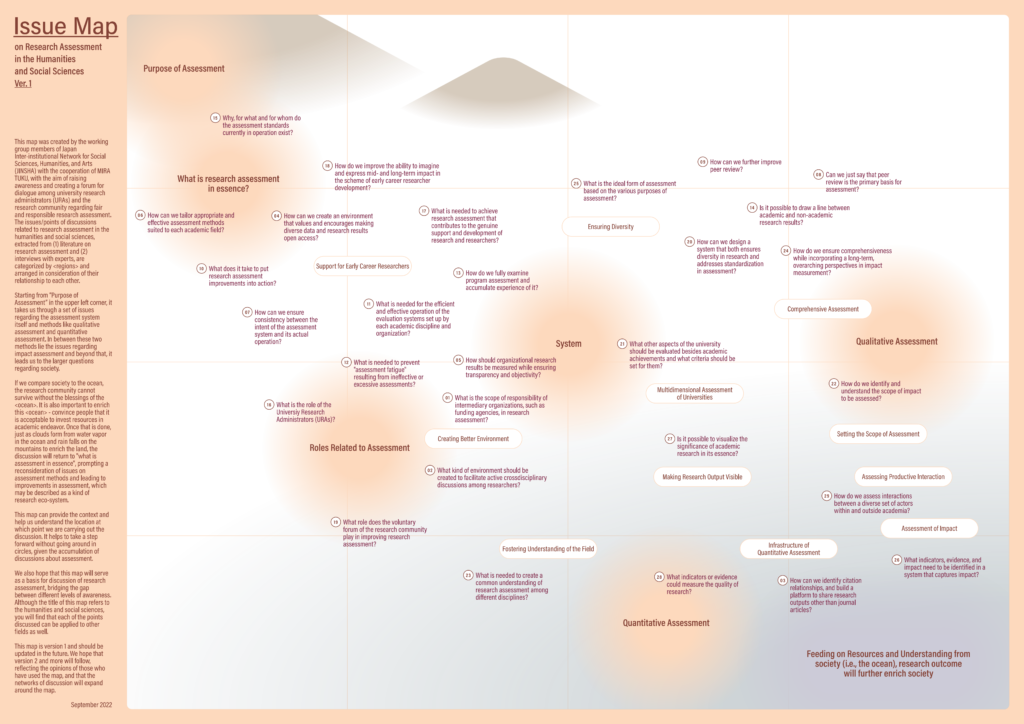

In November 2021, DORA announced that we were piloting a new Community Engagement Grants: Supporting Academic Assessment Reform program with the goal of building on the momentum of the declaration and providing resources to advance fair and responsible academic assessment. In 2022, the DORA Community Engagement Grants supported 10 project proposals. The results of the Creating a Platform for Dialogues on Responsible Research Assessment: The Issue Map on Research Assessment of Humanities and Social Sciences project are outlined below.

By Futaba Fujikawa and Yu Sasaki — Kyoto University Research Administration Office (Japan)

Background and Purpose of the Project

Japan is a latecomer to the debate on Responsible Research Assessment (RRA). Traditionally, institutional assessments of research organizations have been based primarily on peer-review exercises. This is now changing rapidly. Policy makers have begun to perceive that Japanese research capability is in decline, based on the reduced growth rate of journal publications by Japanese scholars. This perception of decline has resulted in an increased emphasis on metric-based approaches.

It is in this context that the Science Council of Japan issued a recommendation that raised fresh questions about the use of metrics, especially for determining procedures for resource allocation. These concerns about metrics, assessments and goal setting have understandably led to increased interest in the various issues linked to research evaluation. Gradually but steadily, the RRA agenda is gaining support. In September 2021, only three of the more than 2,600 organizations that had signed DORA were Japanese. A year later, the number had increased to ten, but there are still no signatories from universities or research funding agencies.

The Japan Inter-institutional Network for Social Sciences, Humanities and Arts (JINSHA) has taken the lead in incubating discussions on research evaluation exercises in humanities and social sciences (HSS) research since 2014. More recently, responsible metrics and RRA have been key ideas that the network has taken up in various seminars and workshops to create a forum for continuous discussion and dialogue.

Building on the ongoing discussions and the information accumulated so far, how can we move toward the practice of RRA? To take up this challenge, we decided to create a visually appealing “map” of the key issues and information regarding research assessment. Our aim is to build common ground for discussing how far we can develop credible assessment exercises, while avoiding repetition of the same arguments. By addressing gaps in existing knowledge and awareness of research assessment issues, we also intend to encourage University Research Administrators (URAs) and stakeholders to discuss how practical measures can be adopted to enable the implementation of RRA.

Project Process

The project was implemented through the following process:

Planning

In early June 2022, we held a kick-off meeting with the working group (WG) members from the JINSHA network and a meeting with the project members of the nonprofit organization MIRA TUKU to discuss our purpose and the necessary next steps. The members of MIRA TUKU, who are involved in various co-creation projects, continued to collaborate with us throughout the project.

Interviews

In preparation for the mapping, we interviewed the following experts to gather, in advance, knowledge and basic information on the assessment of HSS research that is not available in the literature:

- Shota FUJII, Associate Professor, Social Solution Initiative, Osaka University

- Makoto GOTO, Associate Professor, National Museum of Japanese History

- Ryuma SHINEHA, Associate Professor, Research Center on Ethical, Legal, and Social Issues, Osaka University

Literature Listing

The WG members worked together to compile a list of approximately 65 references and resources on the assessment of HSS research and related topics. The WG members then voted and commented on these references, narrowing the list down to 18.

Extracting the Issues

The project members of MIRA TUKU extracted 90 issues and discussion points from the 18 selected references and the 3 interviews. The 90 issues were then grouped and mapped along tentative axes.

Workshop

Based on the issues identified above and the tentative mapping, we deepened the discussion at the 14th JINSHA Meeting, “The Series on Responsible Research Assessment – Creating a ‘Map’ to Move One Step Forward” (held online on July 28), in three discussion groups:

1: Visualization of HSS research output

Starting from issues such as “What indicators and evidence can measure the quality of the humanities and social sciences?”, Group 1 explored what URAs can do to ensure RRA from the researchers’ standpoint. The members of this group raised points including:

- Each researcher should be able to talk about the meaning of his or her research, and URAs should be able to draw it out from the researchers. If we do not continue this effort, we will not be able to lay the foundation for research assessment, let alone visualization.

- Although no sufficient database exists, some tangible, quantitative assessment is unavoidable in order to communicate with the natural sciences.

- The humanities and social sciences are already accepted by society. To understand what people find valuable, a marketing perspective is necessary for visualization, and this is where URAs can play a role.

2: Meeting Quantitative Assessment Needs

This group explored questions including: given the diversity of HSS research, how should URAs deal with the need for institutional and individual quantitative assessment?

- If the assessment indicator for a particular program is set as the number of Top 10% papers, we have no choice but to follow it. But it is also possible to demonstrate, at the same time, other qualitative achievements that contributed to the program's original goal. To do so, we need to refine our methods for visualizing results effectively so that they can be presented when necessary. URAs can play an active role in this area.

- It would be good if the map could be used as a tool for sharing the same mindset when discussing responses to specific programs. Also, when we “lose sight” (when we are sweating to make our performance against an indicator look good), we can go back and ask what the limitations of the data are, what the original purpose is, and what RRA is. The map could be used as a reminder.

3: How to make a map

This group considered the map itself from an overarching perspective, and how it could help us connect the discussions and information accumulated to date to RRA practices.

- When we try to deal with an issue, there may be some peripheral issues that become barriers. If we can add those peripheral issues to the map as well, it would help us focus on the issues that need to be solved.

- When actors in different positions (policy makers, funding agencies, university executives, researchers, and URAs) talk, they often do not engage in real discussion. It would be good if a map could help them share the issues and foster a common understanding.

After discussions at the workshop, it became clearer what kind of map we should make:

- Since issues involving research assessment are complex, the map will help locate the topics and clarify which positions the discussants are taking.

- The map can also be used as common ground for discussion by visualizing various assumptions and scattered information.

- Ideally, a network would grow that meets to upgrade the map and regularly confirms which parts of the map have progressed.

Outcome: Issue Map on Research Assessment in the Humanities and Social Sciences

Based on these ideas about the map, we created the final version. Its 29 issues are divided into “regions” and placed in relation to each other. Starting from “Purpose of Assessment” in the upper left corner, the map takes us through a set of issues regarding the assessment system itself and methods such as qualitative and quantitative assessment. Between these two methods lie the issues regarding impact assessment, and beyond them the map leads us to the larger questions regarding society.

If we compare society to the ocean, the research community cannot survive without the blessings of the “ocean”. It is also important to enrich this “ocean”, that is, to convince people that it is acceptable to invest resources in academic endeavors. Once that is done, just as clouds form from water vapor over the ocean and rain falls on the mountains to enrich the land, the discussion will return to “what is assessment in essence”. This prompts a reconsideration of issues around assessment methods and leads to improvements in assessment, a cycle that may be described as a kind of research ecosystem.

Future Prospects

As described above, the series of processes used to create the map was carried out by a cooperative team spanning institutions and affiliations, with URAs playing a central role. Because there is still a long way to go in implementing RRA, for a variety of reasons, we aimed to create a map that can be used for practical purposes rather than one that simply organizes past discussions. We did so by bringing together the strengths of diverse actors and deepening discussions as the project progressed. MIRA TUKU's know-how in structuring and effectively visualizing information, the knowledge of the experts, and the many dialogues that took place during the course of this project all contributed to the creation of this map.

We hope that this map will show multiple paths beyond the issues, and that the network of discussion will continue to expand as Ver. 2 and Ver. 3 of the map are developed, reflecting the opinions of those who have used it.

Resources

Recommendation ‘Toward Research Evaluation for the Advancement of Science: Challenges and Prospects for Desirable Research Evaluation’ (https://www.scj.go.jp/ja/info/kohyo/pdf/kohyo-25-t312-1en.pdf) Subcommittee on Research Evaluation, Committee for Scientific Community, Science Council of Japan