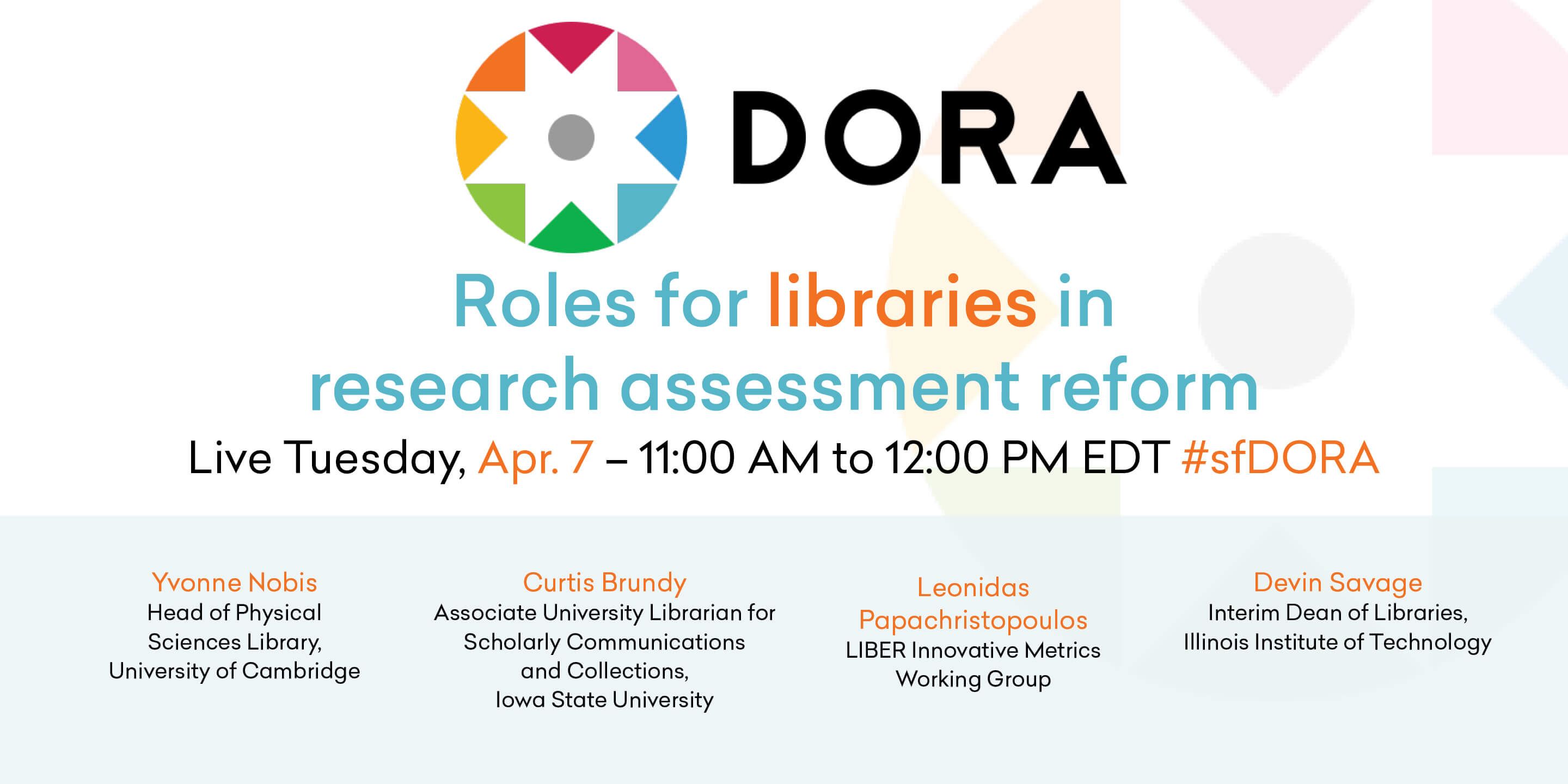

On Tuesday, April 7, 2020, more than 180 individuals around the world joined DORA’s webinar discussion to examine what roles libraries play in research assessment reform. Panelists Curtis Brundy (Iowa State University), Yvonne Nobis (University of Cambridge), Leonidas Papachristopoulos (Hellenic Open University Distance Library and the Association of European Research Libraries), and Devin Savage (Illinois Institute of Technology) discussed their experiences as librarians working to improve how research is evaluated on campus.

Source of bibliometric expertise

Librarians are experts in bibliometrics, says Nobis, so educating academics about responsible metrics is an obvious role for them. The panelists agree that it is not a library’s responsibility to tell disciplines how to assess the quality of their research; rather, its responsibility lies in showing the limitations of different indicators. To help, the Innovative Metrics Working Group for the Association of European Research Libraries (LIBER) published recommendations for librarians about scholarly indicators that cover four axes: discoverability, showcasing achievements, service development, and research assessment. Papachristopoulos also recommends offering education on metadata and repositories.

In Nobis’s experience at Cambridge, the Math and Physics departments were the first to call for change, because the researchers saw the system was inequitable. The use of traditional indicators in research assessment limits the type of acceptable research outputs to peer-reviewed journal articles. This presents a challenge for disciplines that produce other types of research output. For example, Nobis noted an instance where a researcher’s preprint had more citations than the final peer-reviewed article. Computer code is another example of an output whose quality and impact can be challenging to measure. Nobis notes, “We have tools, but they are not doing their job properly.” She emphasizes the importance of taking a step back to examine what information is and is not captured by different indicators.

A facilitator for change on campus

Iowa State University is a land-grant university, meaning that part of its mission is to create and share knowledge for Iowans and the broader public. Brundy sees this as a core library value too. He described librarianship as a value-based profession, which aligns with the aspirations of research assessment reform. As a centralized resource on campus, Brundy believes that libraries are in a unique position to facilitate change through outreach and relationship building. For his part, he has been collaborating with the vice provost’s office to organize conversations about responsible research assessment on campus. At the Illinois Institute of Technology, Savage started a dialogue on research assessment with the university faculty council and reached out to other deans.

Institutional change takes a strong coalition. In getting started, both Brundy and Savage introduced DORA as an action item for discussion on campus. Brundy brought the discussion about DORA to his colleagues at the library; Savage started with the university faculty council. It’s okay if there is some resistance, Savage says, because the main goal is to start a dialogue to identify what parts of the system are not working for researchers. And because most academics are pressed for time, any facilitation done by libraries needs to be intentional. The good practices on DORA’s webpage help frame conversations, Savage noted, because you can learn from other organizations.

Influence of collections

The emphasis on journal prestige has a direct effect on library collections. Libraries purchase subscriptions to scholarly journals, and rewarding researchers based on where they publish drives up the cost of those subscriptions. For example, Papachristopoulos shared how one faculty member wanted access to a journal because of its reputation, even though the subscription had not been used by researchers in the previous two years. Based on current trends, Savage predicts that traditional collections practices will become unsustainable in the next 5 to 10 years. Libraries pay the cost of prestige culture. And when they have to buy journal subscriptions based on notions of prestige, it undercuts core library principles of openness, equity, and valuing other outputs.

Action items

So what can libraries do right now? In his experience promoting responsible assessment, Brundy found that passive strategies like creating library guides are only nominally effective. But there is an opportunity for libraries to rethink how to frame metrics assistance in the context of research assessment. Papachristopoulos recommends educating academics about tools and concepts, including ORCID iDs, persistent identifiers, and data reusability, which encourage a more holistic view of research assessment. Surveys can be used to reach a wide audience, an approach taken by the Illinois Institute of Technology and the University of Cambridge to better understand views about responsible research assessment on campus.

Brundy suggests a staged approach. After convincing his library to sign DORA and become an official DORA supporter, he has now set his sights on the university. This taps into the facilitator role that libraries can play through relationship building. Savage agrees with this type of bottom-up approach, because it helps build a broad coalition of support.